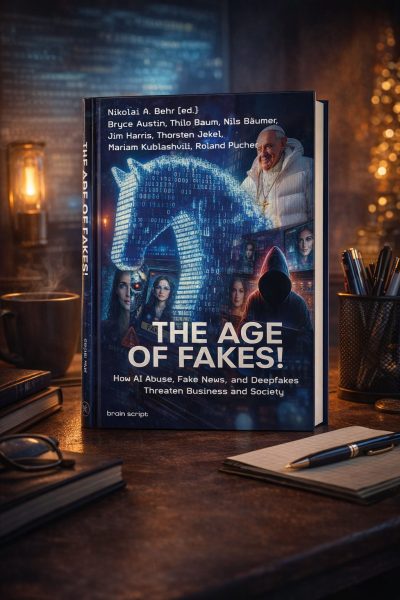

THE AGE OF FAKES!

How AI Abuse, Fake News, and Deepfakes Threaten Business and Society

Why this book matters now

Artificial intelligence has lowered the cost of deception. Synthetic media, deepfakes, and AI-generated narratives are increasingly used to manipulate markets, reputations, and decision-making processes. Organizations are no longer dealing with isolated incidents but with systemic risks that affect leadership, compliance, and trust.

The Age of Fakes!

Artificial intelligence has transformed how information is created, distributed, and consumed. At the same time, it has radically lowered the barriers to manipulation. Deepfakes, AI-generated misinformation, and synthetic media are no longer fringe phenomena. They already influence financial decisions, damage reputations, enable cybercrime, and undermine trust in institutions, markets, and democratic processes.

The Age of Fakes! examines AI-driven deception not as a technological curiosity, but as a systemic risk for organizations and society. The book explores how fake news, deepfakes, and algorithmic manipulation operate across business, politics, media, and security—and why traditional technical countermeasures alone are no longer sufficient.

Edited by Dr. Nikolai A. Behr, the volume brings together perspectives from cybersecurity, communication science, management, law, and security policy. Rather than focusing on sensational incidents, the contributors analyze underlying mechanisms: how narratives are engineered, how emotional vulnerabilities are exploited, and why decision-makers increasingly operate in environments where authenticity can no longer be taken for granted.

A central theme of the book is trust: how it is eroded, how it is weaponized, and why it ultimately becomes a strategic asset. The Age of Fakes! shows why leadership, communication competence, media literacy, and governance are now core responsibilities for executives, boards, and public institutions dealing with AI-driven risks.

Written for an international professional audience, this book offers orientation, analytical depth, and strategic context for anyone who needs to make informed decisions in the age of artificial intelligence.

Inside the Book – Perspectives and Contributions

Jim Harris

Jim Harris focuses on the convergence of deepfake technology and cybercrime. He shows how AI-driven fraud, voice cloning, and synthetic video already cause significant financial damage—and how responsibly designed AI systems can also support detection, prevention, and organizational resilience.

Bryce Austin

Bryce Austin examines the growing gap between rapid AI development and the ability of legal systems to regulate it. Using international examples, he explains why existing AI laws struggle with opacity, hallucinations, and accountability—and why new regulatory thinking is urgently needed.

Nikolai A. Behr

Nikolai A. Behr takes readers on a historical and personal journey through the evolution of deception, from ancient times to the present day. This historical cross-section shows that while the methods of deception keep evolving, the human tendency to deceive and to be deceived remains constant.

Who this book is for

- Executives, board members, and senior leaders

- Compliance, risk, governance, and cybersecurity professionals

- Corporate communicators and public affairs specialists

- Policymakers, advisors, researchers, and educators

The Age of Fakes! helps organizations and leaders understand AI-driven deception before they are forced to react to its consequences.

What readers gain

- Understand how AI-driven disinformation works in practice

- Identify organizational and leadership vulnerabilities

- Recognize the limits of purely technical countermeasures

- Gain strategic orientation beyond headlines and hype

Jim Harris hits the mark by emphasizing that self-regulation is essential in an unregulated digital world. Individuals and corporations must erect their own guardrails against the dangers posed by AI through education, training, and vigilance.

Editor-at-Large at The National Post (Canada) & best-selling Substack author

Key areas covered

- AI abuse, fake news, and deepfakes as systemic risks

- Cybercrime, executive fraud, and social engineering

- Media manipulation and reputational damage

- Governance, compliance, and regulatory challenges

- Leadership, communication, and resilience in the AI age

This intriguing chapter highlights the significant risks associated with AI. But it is more than just a theoretical look at the issues of cybercrime. It is a practical, far-reaching and expansive review that should be mandatory reading for every person as AI permeates every facet of society and business.

Globally Recognized Futurist & Multiple Time Bestselling Author on the Future of Work and Artificial Intelligence

Written for professionals who carry responsibility

- Board members and executives

- Compliance, risk, and governance leaders

- Cybersecurity and IT decision-makers

- Corporate communication and public affairs

- Policymakers, advisors, and researchers

This is an excellent book that will arm you with tactics and techniques to create a personal and professional career loaded with successful outcomes.

High Point University – #1 Best-Run College in the Nation (The Princeton Review)

Not alarmist. Not technical-only. Strategic.

The Age of Fakes! does not focus on panic or isolated scandals.

It provides analytical depth, interdisciplinary insight, and a leadership-oriented perspective on AI-driven deception.

Book details:

- Title: THE AGE OF FAKES! How AI Abuse, Fake News, and Deepfakes Threaten Business and Society

- Editor: Dr. Nikolai A. Behr

- With contributions from: Bryce Austin, Thilo Baum, Nils Bäumer, Jim Harris, Thorsten Jekel, Mariam Kublashvili, Roland Pucher, Nikolai A. Behr

- Publisher: brain script

- ISBN: 978-3-9828010-0-1

- Format: Softcover

- Number of pages: 268

- Price: 24.99 USD

FAQ – The Age of Fakes!

What is The Age of Fakes! about?

Why is this book relevant for business leaders today?

Who should read this book?

Does the book focus on technical AI or leadership implications?

Is this book alarmist about artificial intelligence?

What makes this book different from other AI books?

Does the book include practical tools or only theory?